The augmented reality aesthetic layered over traditional murim visuals is such a specific creative choice and it almost should not work. But it does. Completely.

Esme-Romero

The emperor's final message plotline is what is keeping me most invested. What does a dead emperor have to say to a crumbling empire 120 years later and why does it still matter?

Return Of The Demonic Instructor Perfectly Blends Murim And Sci-Fi

When you think of murim manhwa, your mind probably conjures images of ancient martial arts sects, internal energy cultivation, and warriors battling with swords and bare fists in historical settings. Science fiction elements like outer space invasions, advanced technology, and apocalyptic scenarios belong to completely different stories. Return of the Demonic Instructor takes these seemingly incompatible genres and weaves them into something genuinely innovative. Released on Webtoon in January 2026, this series arrived at the perfect moment when readers were hungry for fresh takes on established formulas. The premise alone sounds wild. A murim world gets invaded by demons from outer space, forcing martial artists to adapt centuries-old techniques to fight extraterrestrial threats. Then throw in regression, magic systems, and apocalyptic survival elements for good measure.

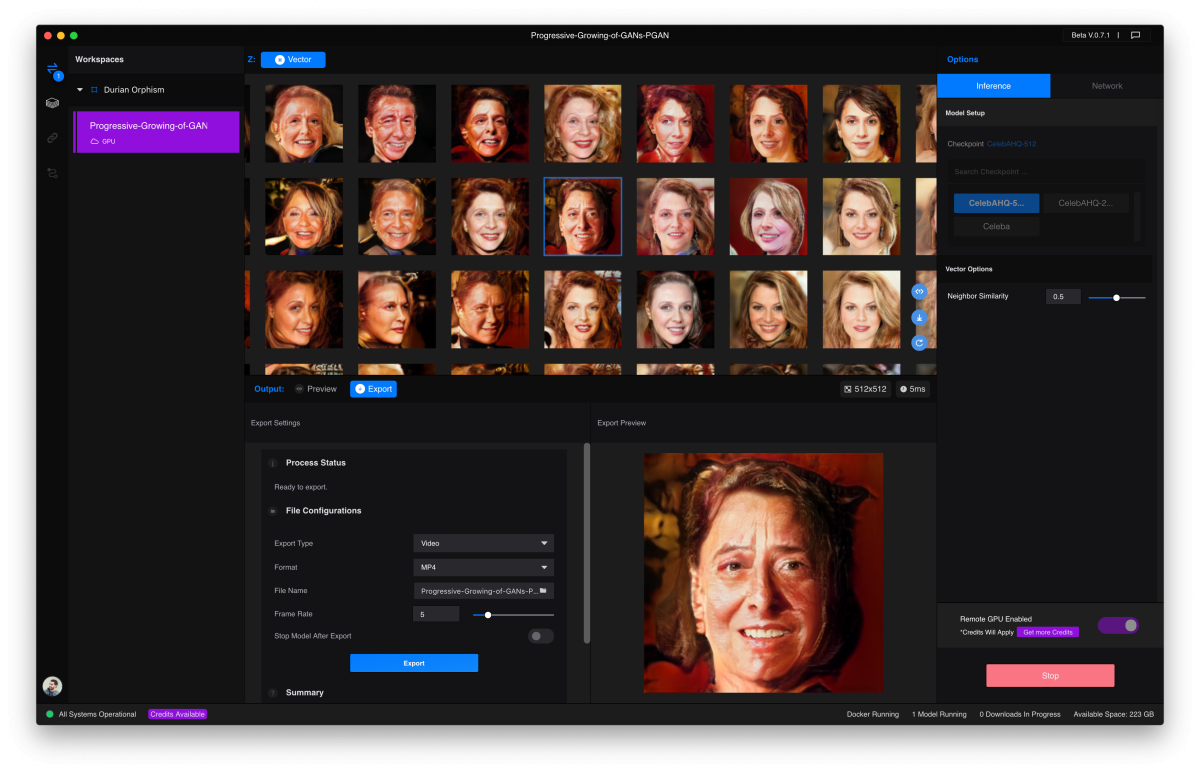

Nano Machine Has The Best Combat Art In Manhwa Right Now

In a medium filled with talented artists producing stunning work, making a claim about any series having the "best" art feels bold. Yet Nano Machine consistently delivers combat sequences so fluid, detailed, and visually innovative that even readers who don't typically care about martial arts stories find themselves captivated by the sheer spectacle on display. The series combines traditional murim aesthetics with futuristic sci-fi elements, creating a unique visual identity that stands apart from typical cultivation manhwa. The nano machine implanted in protagonist Cheon Yeo-Woon's body doesn't just give him power. It becomes a storytelling device that allows the artist to visualize techniques, energy flows, and combat analysis in ways other series can't replicate.

Beating Google And OpenAI At Video Generation With A 1,247 Elo Score

The AI video generation race just got a clear winner. Runway Gen-4.5 topped the Video Arena leaderboard with a 1,247 Elo score, surpassing both Google Veo 3 and OpenAI Sora 2. For those unfamiliar with Elo ratings, this is the same system used to rank chess players and competitors in other head-to-head games. A higher score means more wins in head-to-head comparisons. When real users compare videos side by side without knowing which AI generated them, they consistently choose Runway's output. Runway didn't start as an enterprise video tool. It began as a playground for artists and filmmakers who wanted to experiment with AI-generated visuals. The early versions produced fascinating but inconsistent results. Sometimes you'd get stunning cinematic footage. Other times you'd get distorted motion and unrealistic physics. Gen-4.5 changed that equation by achieving breakthrough consistency in motion quality and physical accuracy.
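
For readers who want to see how an Elo gap turns into a win rate, here is a minimal sketch of the classic chess-style Elo formulas. The leaderboard's exact rating method is not described above, so treat this as an illustration only. The rival rating of 1,200 and the K-factor of 32 are hypothetical values chosen for the example, not figures from the leaderboard.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Chance that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return both ratings after one comparison.

    score_a is 1.0 if A won the comparison, 0.0 if B won, 0.5 for a tie.
    k (the K-factor) is an assumed value; real leaderboards pick their own.
    """
    exp_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - exp_a)
    # Whatever A gains, B loses, so the total rating pool stays constant.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    leader = 1247.0  # score quoted in the article
    rival = 1200.0   # hypothetical rival rating, for illustration only
    print(f"Expected win rate for the leader: {expected_score(leader, rival):.3f}")
    print("Ratings after one more win:", update(leader, rival, 1.0))
```

The takeaway is that a lead of a few dozen points means the higher-rated model is preferred somewhat more than half the time, not every time.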

This is the kind of tool that sounds great in the pitch and then causes a compliance crisis eighteen months after deployment when nobody thought to ask about data governance. Ask first.

One thing the article missed is what happens when the AI gets the summary wrong. A 95% accurate transcript means roughly 1 in 20 words is off. In a 30-minute meeting that could mean a meaningfully distorted action item or a misquoted decision. Someone still needs to verify.
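
To put a rough number on that, here is a back-of-the-envelope sketch. The speaking rate of 150 words per minute is an assumption for illustration, not a figure from the article, and the calculation treats the 95% as word-level accuracy.

```python
def expected_word_errors(minutes: float, words_per_minute: float, accuracy: float) -> float:
    """Estimate how many transcript words are wrong, given word-level accuracy."""
    total_words = minutes * words_per_minute
    return total_words * (1.0 - accuracy)


if __name__ == "__main__":
    # 30-minute meeting, assumed 150 words per minute, 95% word accuracy.
    errors = expected_word_errors(minutes=30, words_per_minute=150, accuracy=0.95)
    print(f"Roughly {errors:.0f} words likely wrong")
```

Under those assumptions a 30-minute meeting contains about 4,500 words, so around 225 of them would be wrong. Most will be harmless, but it only takes one in a name, date, or number to distort an action item.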

The background task automation feature changes the workflow more than anything else. Starting a task before a meeting and coming back to a finished feature is a completely different relationship with your tools than traditional development.

152% Growth By Making Every Employee Their Own Video Production Studio

While Synthesia leads in revenue, HeyGen leads in customer acquisition momentum with 152% year-over-year growth in mid-market adoption. That explosive growth rate allowed HeyGen to close much of the customer count gap by late 2025. The company is winning by making avatar video accessible to smaller teams and individual creators who cannot afford enterprise contracts but need professional video capabilities. HeyGen positioned itself for small and medium businesses, marketing teams, content creators, and solo entrepreneurs rather than enterprise learning and development departments. This market segment values affordability, ease of use, and creative flexibility over governance features and advanced integrations. Average contract values are roughly one-third of Synthesia's, reflecting this different customer profile.

Nobody ever posts the failure stories though. The channels that went all in on AI avatars and lost audience trust when they disclosed it, the agencies whose clients pulled back when they found out. Survivorship bias makes every case study look cleaner than reality.

As a software developer I have complicated feelings about this. On one hand it could meaningfully improve the security of code I ship. On the other hand the same capability that patches my code can be used to attack systems I depend on if it ever escapes the restricted group.

The Iran development, if it proves real and durable, is genuinely one of the most important real-world use case stories since El Salvador. Nations using Bitcoin for sanctions evasion is one thing. Nations using it for legitimate oil settlements is another entirely.

The Anthropic news lands in the same week they are fighting the US government in court over something separate. That company is dealing with a lot of strategic fronts simultaneously. Can they really afford the attention bandwidth for a chip program on top of everything else?

Meta’s Muse Spark Moment And The Beginning Of The AI Super App Era

Meta has just had one of its most important AI moments yet and the early signals are hard to ignore. Following the launch of its newest AI model Muse Spark, the company’s standalone Meta AI app surged dramatically in popularity, hinting at a much larger shift that is beginning to take shape. The release is particularly significant because it marks the first major AI model rollout under Alexandr Wang, who joined Meta to reboot its AI strategy. This is not just another incremental update. It represents a more aggressive and focused push into the AI race. According to data from Appfigures, Meta AI jumped from number 57 to number 5 on the U.S. App Store within a day of the launch. That kind of movement rarely happens without a strong underlying pull from users. It signals not curiosity but intent.

I work in digital safety for a nonprofit and the Internet Watch Foundation angle is important. Child protection organizations have genuinely been begging platforms to find solutions that allow content scanning without full E2EE. TikTok is not inventing this concern.

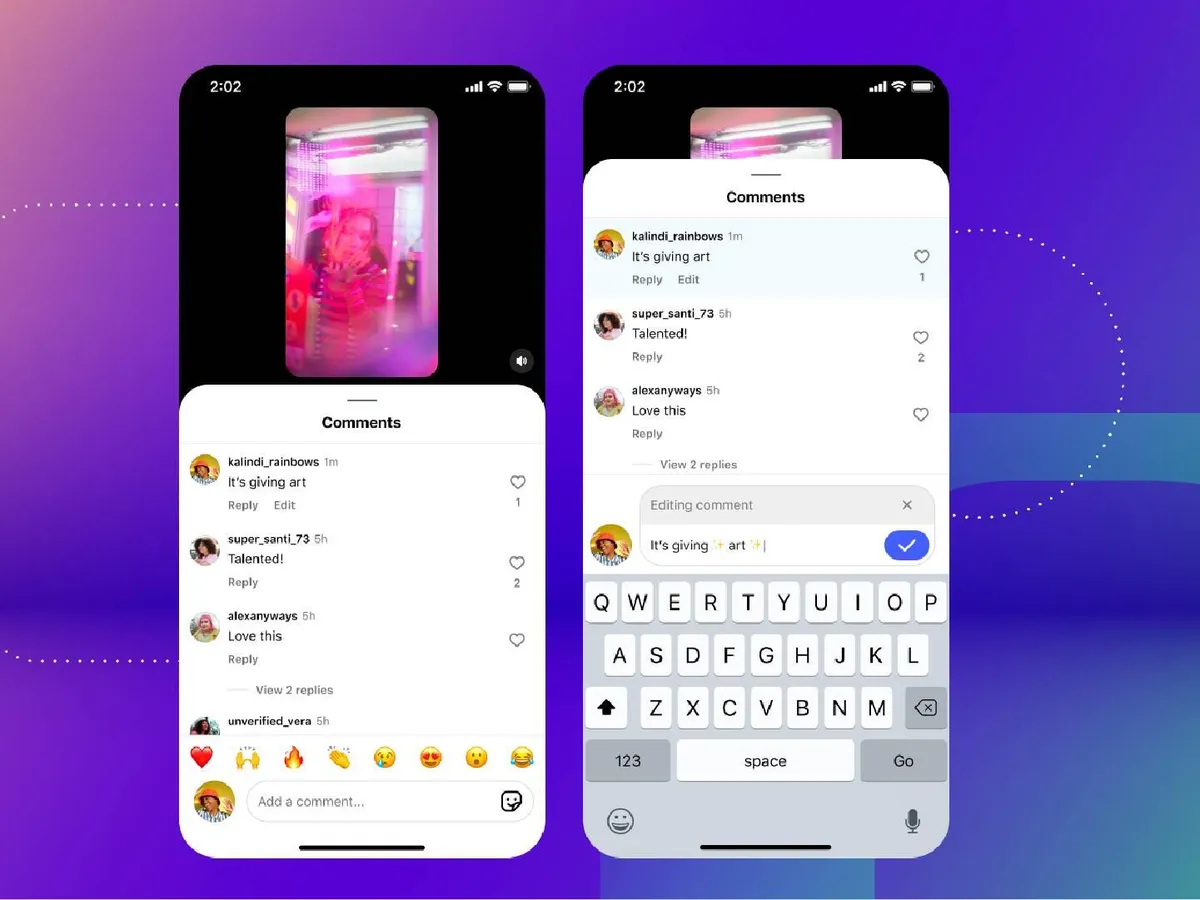

Instagram Finally Lets You Edit Comments And It Signals A Bigger Shift Ahead

Instagram has rolled out a small but long-overdue feature that users have been requesting for years. You can now edit your comments after posting them. This simple change solves a very real frustration. Until now, fixing even the smallest typo meant deleting your comment and writing it all over again. That friction is finally gone. But there is a boundary. You get a 15-minute window after posting to make edits. Within that time, you can update your comment as many times as you want. There is also a layer of transparency built in. Once a comment is edited, others will be able to see that it has been modified. However, unlike platforms such as iMessage, Instagram does not show the edit history. What was originally written stays hidden.

The moment of bumping heads with Piccioli went viral and I loved that it did. Two polished, composed people having a totally normal awkward human moment. That is the stuff that makes public figures actually likable.

The mix of feminine and edgy elements in this outfit is absolutely perfect. I'm saving this for inspiration!