This is exactly what I was afraid of when they announced the Jackson estate was involved in production. Every cloying scene has their fingerprints all over it.

Natalie_55

Speaking from experience training combat sports, the way The Boxer renders fear before a fight is more accurate than most fiction written about it. That specific dread is almost impossible to communicate and JH somehow does it visually.

Claiming that any single series has the absolute best anything in a medium this large and varied is going to invite pushback, and it should. Nano Machine is exceptional. Best ever is a stretch.

The marketing agency use case is the one I keep seeing underreported. Agencies are the hidden power users here. They are running HeyGen accounts behind dozens of client brands and clients have no idea.

The article makes the ROI case almost entirely on cost reduction. That is the right argument for procurement but it is the wrong framing for learning strategy. We should be asking whether people are actually better at their jobs afterward.

Edit Video By Deleting Words Like You're Fixing A Google Doc

Most people can edit a Google Doc. Delete some words, rearrange sentences, fix typos, add paragraphs. It's intuitive and requires no special training. Now imagine editing video the same way. That's Descript's core innovation, and it transformed video editing from a specialized skill requiring expensive software into something anyone who can edit text can do effectively. Descript started as a transcription tool for podcasters. Record your podcast, upload it to Descript, and get an accurate transcript for show notes. But the founders realized something bigger. If you have a perfect transcript synchronized to audio, you can edit the audio by editing the text. Delete a word from the transcript and that word disappears from the audio. That insight became the foundation for a complete editing platform.
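The transcript-to-audio idea can be sketched in a few lines of Python. This is only an illustration of the general technique, not Descript's actual implementation: the `Word` type, the `keep_ranges` function, and the sample timestamps are all hypothetical. It assumes each transcribed word carries a start and end time, and that deleting a word means dropping its time span from the audio.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    # Hypothetical transcript entry: one word plus its position in the audio.
    text: str
    start: float  # seconds into the recording
    end: float    # seconds into the recording


def keep_ranges(words, deleted):
    """Return merged (start, end) audio ranges for the words that survive.

    `deleted` is a set of indexes into `words` that the user removed
    from the transcript.
    """
    ranges = []
    for i, word in enumerate(words):
        if i in deleted:
            continue
        if ranges and (i - 1) not in deleted:
            # The previous word was kept too, so extend the current range
            # (this also keeps the natural pause between the two words).
            ranges[-1] = (ranges[-1][0], word.end)
        else:
            # First kept word, or first kept word after a deletion.
            ranges.append((word.start, word.end))
    return ranges


# Made-up sample transcript with an "um" to cut.
words = [
    Word("so", 0.0, 0.3),
    Word("um", 0.4, 0.7),
    Word("welcome", 0.8, 1.3),
    Word("back", 1.35, 1.7),
]

# Deleting the word at index 1 leaves two audio segments to splice together.
print(keep_ranges(words, {1}))
```

An editor built this way would hand the resulting ranges to an audio or video tool that cuts and joins the segments. The text edit itself never touches the media file directly.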

To the person saying regression is overdone, I get it, but the specific angle here is different. Most regression protagonists go back to improve their personal standing. Bigang goes back to prevent a planetary apocalypse nobody else believes is coming. That changes the whole dynamic.

The 128k context window thing is real but prompt quality still matters a lot. Feeding v0 your entire design system and getting back something coherent requires thoughtful prompting, not just dumping files and hoping for magic.

Voice cloning consistency is the sleeper feature here. Personal brand recognition is built on voice as much as face, and being able to maintain that audio identity across hundreds of videos without recording each one is actually kind of profound.

Speaking from experience in L&D: the governance thing is not just corporate box-ticking. When you have 50 people creating training videos, brand consistency and content approval matter enormously. HeyGen was not really built for that workflow and it shows when teams scale past ten users.

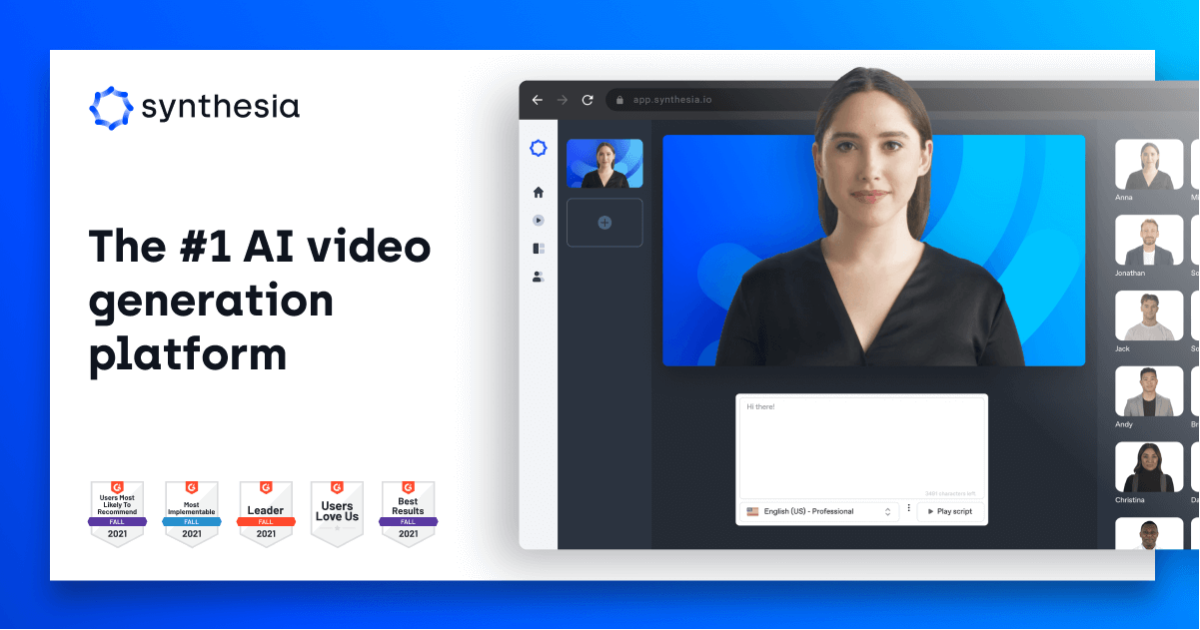

$200M Funding And 140 Languages Later, Your Training Videos Write Themselves

When a company raises $200 million in Series E funding in January 2026, investors are betting on more than potential. They're backing proven market demand and sustainable growth. Synthesia's funding round came alongside a 44% year-over-year increase in headcount to 706 employees, signaling aggressive expansion in a category the company essentially created: AI avatar-based video generation for enterprise training and communications. Corporate training videos have been expensive and slow to produce for decades. Recording a single 10-minute training module traditionally required booking a studio, hiring a presenter, scheduling a videographer, managing multiple takes, and editing everything together. If you needed to update information or translate content, you essentially started over. Synthesia eliminated this entire production workflow by replacing human presenters with AI avatars.

What happens to the Ray-Ban glasses when this rolls out to the hardware ecosystem? That is where the ambient AI angle gets genuinely interesting and genuinely creepy in equal measure.

Anthropic's Strategic Shift: The Race To Control AI Computing Infrastructure

The artificial intelligence industry is entering a new phase of competition, one that extends far beyond the development of advanced language models and neural networks. Companies are now engaged in an intense struggle to secure the computational infrastructure necessary to train and deploy their AI systems. In this context, Anthropic has reportedly begun exploring the possibility of designing and manufacturing its own specialized processors to power Claude, its flagship conversational AI platform, along with its broader suite of artificial intelligence technologies. This strategic consideration emerges at a critical moment in the global AI sector. The exponential growth in model complexity and capability has created unprecedented demand for high-performance computing resources. Sources familiar with the matter indicate that Anthropic is conducting feasibility studies to determine whether developing proprietary semiconductor technology could reduce its dependence on external hardware vendors while ensuring reliable access to the computing power required for its operations.

Whether you like her or not, the level of scrutiny applied to every physical gesture she makes in public is exhausting to observe. Let the woman watch a runway show.

I actually prefer this with the coat belted rather than open. It creates such a beautiful shape!

The coral lipstick really pulls it all together. Such an unexpected but perfect match with the yellow.