Realistically, the pool of people who could plausibly portray Michael Jackson's physicality, replicate his voice patterns convincingly, and survive the scrutiny of the Jackson family was never very large. Even a list of dozens of candidates narrows very fast when you apply all those filters.

EchoPhantom

This might be an unpopular opinion but I am deliberately not starting Copycat until it hits around 40 or 50 chapters. Weekly reading is torture with thriller manhwa. Binge reading is the only way.

Michael Movie Review: Should You Watch The Michael Jackson Biopic In 2026?

The Michael movie review verdict is in, and it is more complicated than the 26% Rotten Tomatoes score suggests. Antoine Fuqua's long-delayed Michael Jackson biopic, simply titled Michael, hit theaters this weekend with Jaafar Jackson playing his late uncle, and the critical response has been brutal. The BBC gave it one star. Roger Ebert's site called it a filmed playlist in search of a story. Yet early audience reactions on social media have been warmer, ticket pre-sales suggest an $80 million opening, and Variety thought it worked as an engrossing middle-of-the-road biopic. After tracking coverage across more than a dozen outlets over the past 48 hours, I think the honest answer to "should you watch this?" depends almost entirely on what you want from a music biopic, and this guide breaks down exactly what the film delivers, what it skips, and who will actually enjoy sitting through its two-hour-and-nine-minute runtime.

Okay but can we talk about how Bigang being unable to use inner power is actually the key to everything? The thing that made him worthless is the exact reason he survived the demons' experiments. That kind of narrative symmetry is rare in manhwa.

The article was focused on themes and atmosphere, which is appropriate for an introductory piece. But you are right that the political texture adds another layer worth exploring.

The article is overselling it a bit. The middle chapters do drag in places and some of the construction arc explanations go on longer than they need to.

The Tapas season two English release starting November 2025 was great, but catching up to where translations currently are went too fast. Now it's just waiting again.

The post mentions integration with SAP SuccessFactors and Workday Learning. The API quality on those integrations is where the rubber meets the road. Surface-level connectors are common. Deep native integrations that actually hold up at scale are rare.

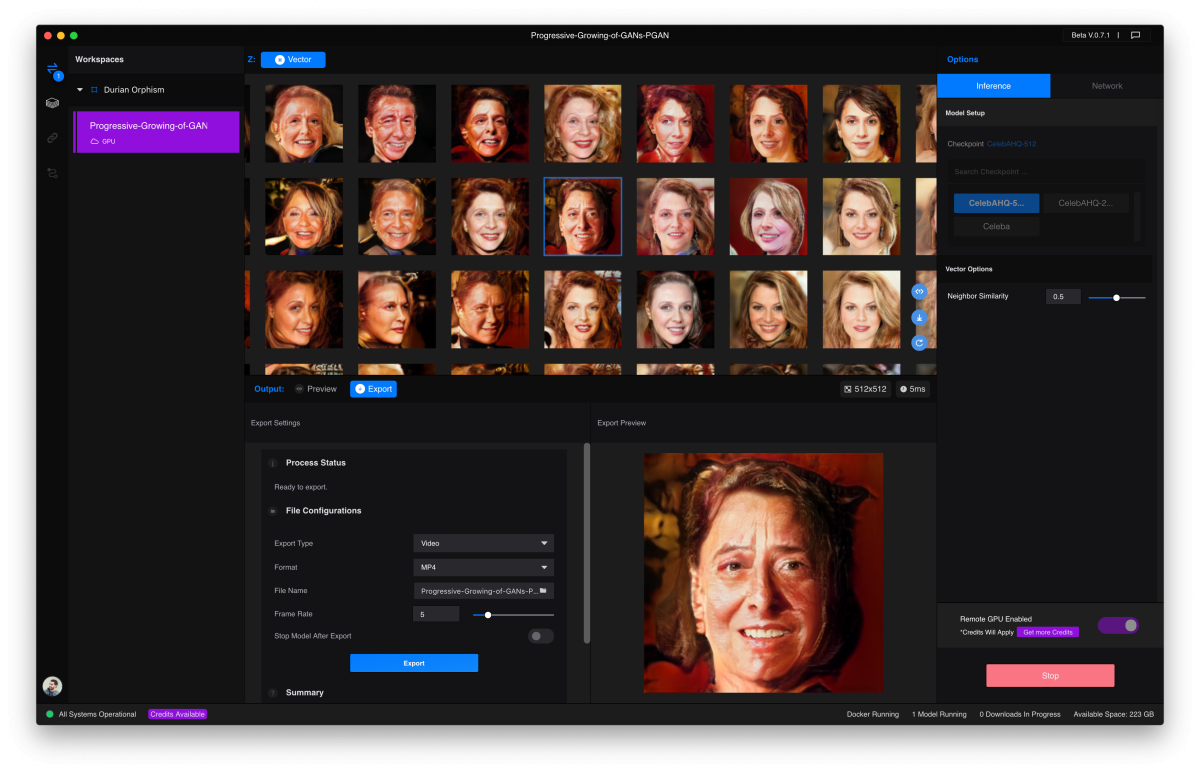

Edit Video By Deleting Words Like You're Fixing A Google Doc

Most people can edit a Google Doc. Delete some words, rearrange sentences, fix typos, add paragraphs. It's intuitive and requires no special training. Now imagine editing video the same way. That's Descript's core innovation, and it transformed video editing from a specialized skill requiring expensive software into something anyone who can edit text can do effectively. Descript started as a transcription tool for podcasters. Record your podcast, upload it to Descript, and get an accurate transcript for show notes. But the founders realized something bigger. If you have a perfect transcript synchronized to audio, you can edit the audio by editing the text. Delete a word from the transcript and that word disappears from the audio. That insight became the foundation for a complete editing platform.
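To make the "edit the audio by editing the text" idea concrete, here is a minimal sketch in Python. It is not Descript's implementation, which the article does not describe. It assumes a hypothetical transcript in which every word carries start and end timestamps, and it computes which time ranges of the recording survive once some words are deleted. The `Word` class and the `keep_ranges` function are names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    """One transcript word with its position in the audio (hypothetical structure)."""
    text: str
    start: float  # seconds
    end: float    # seconds


def keep_ranges(words, deleted):
    """Return the (start, end) audio ranges left after removing the deleted words.

    `deleted` is a set of word indices. Runs of neighbouring kept words are
    merged into one range, so pauses between them are preserved.
    """
    ranges = []
    prev_kept = None  # index of the last word we kept
    for i, word in enumerate(words):
        if i in deleted:
            continue
        if ranges and prev_kept == i - 1:
            # Previous word was kept too: extend the current range.
            ranges[-1] = (ranges[-1][0], word.end)
        else:
            # Start a new range after a cut (or at the very beginning).
            ranges.append((word.start, word.end))
        prev_kept = i
    return ranges


# Assumed sample data: a four-word transcript with a filler word at index 1.
transcript = [
    Word("So", 0.0, 0.3),
    Word("um", 0.3, 0.6),
    Word("welcome", 0.6, 1.1),
    Word("back", 1.1, 1.5),
]
remaining = keep_ranges(transcript, deleted={1})
```

An editor built on this idea would then splice the listed ranges together to produce the new audio. Deleting "um" from the text leaves two ranges, one before the cut and one after it.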

25 free credits is a fair trial. I built a small REST API wrapper during the evaluation and had enough budget left over to debug a gnarly async issue. That is a meaningful test.

Still waiting for someone to explain how the AI eye contact feature actually works without looking deeply unsettling. Every demo I have seen looks a little off.

Anyone else find it kind of wild that a PM can now create a branch, open a PR against main, and ship production code without writing a single line themselves? That would have sounded like science fiction to me three years ago.

People keep comparing this to Boruto, and it's just lazy criticism. The two sequels have completely different problems and strengths; they don't deserve to share the same conversation.

Beating Google And OpenAI At Video Generation With A 1,247 Elo Score

The AI video generation race just got a clear winner. Runway Gen-4.5 topped the Video Arena leaderboard with a 1,247 Elo score, surpassing both Google Veo 3 and OpenAI Sora 2. For those unfamiliar with Elo ratings, this is the same system used to rank chess players and competitive games. A higher score means more wins in head-to-head comparisons. When real users compare videos side by side without knowing which AI generated them, they consistently choose Runway's output. Runway didn't start as an enterprise video tool. It began as a playground for artists and filmmakers who wanted to experiment with AI-generated visuals. The early versions produced fascinating but inconsistent results. Sometimes you'd get stunning cinematic footage. Other times you'd get distorted motion and unrealistic physics. Gen-4.5 changed that equation by achieving breakthrough consistency in motion quality and physical accuracy.
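For readers who want to see what an Elo gap means in practice, here is a short sketch of the standard chess-style Elo formulas in Python. The article does not say exactly how the Video Arena leaderboard computes its ratings, so treat this as the textbook version only. The K-factor of 32 and the 1,147 opponent rating are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability-like expected score for A against B under classic Elo."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return new ratings after one comparison.

    score_a is 1.0 if A won, 0.0 if A lost, 0.5 for a tie.
    k (assumed 32 here) controls how far a single result moves the ratings.
    """
    expected_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - expected_a)
    # Whatever A gains, B loses, so the total rating stays constant.
    return rating_a + delta, rating_b - delta


# Hypothetical matchup: a 1,247-rated model against a 1,147-rated one.
win_chance = expected_score(1247, 1147)
new_a, new_b = update(1247, 1147, score_a=1.0)
```

Under these formulas, a model rated 100 points above its opponent is expected to win noticeably more than half of the blind comparisons, and equal ratings give an even 50/50 split.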

The Figma import feature is criminally underrated. Bring in your design frames directly and Bolt converts them into working code. That alone collapses the handoff process between design and engineering by days.

Reasonable people can disagree about the encryption tradeoff. What is not reasonable is taking that position while simultaneously being investigated by multiple data protection authorities for unauthorized data transfers to a foreign government.

Not gonna lie, the idea of AI being the default interface for everything is either the most exciting or most dystopian sentence in this piece depending on what kind of week you are having.

I set up both tools for our team and watched half of them migrate back to Claude Code within two weeks. The workflow transparency just clicked for them in a way that Codex did not.

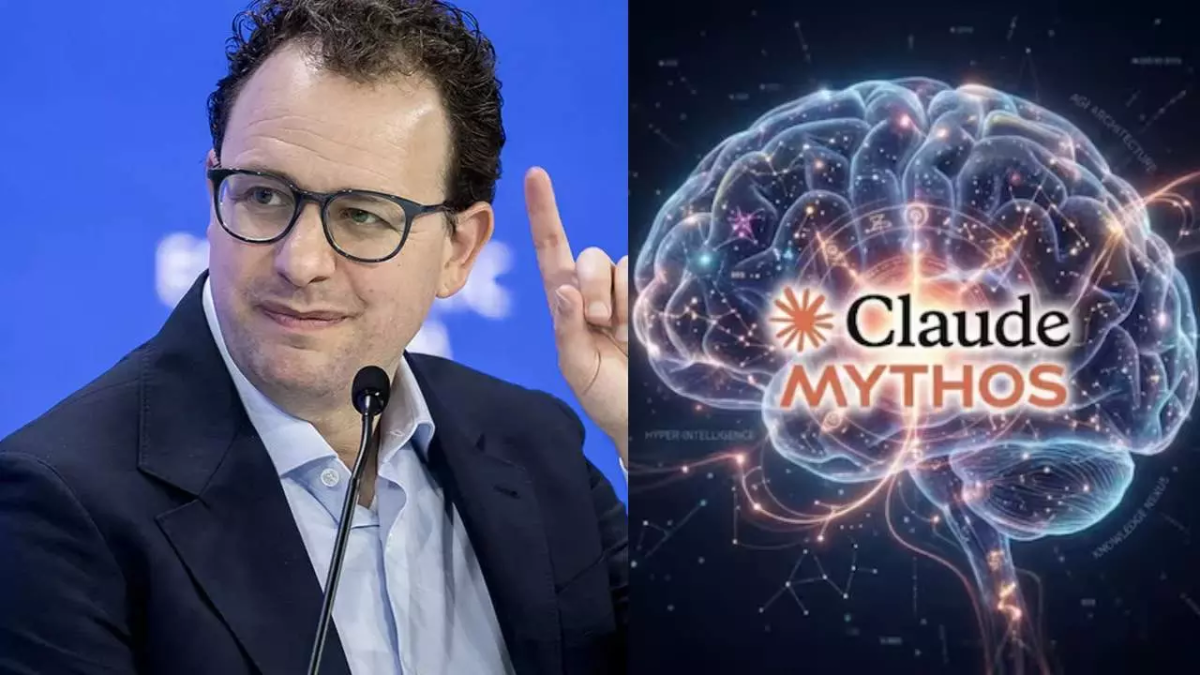

Anthropic Unveils Powerful Cybersecurity AI Model With Restricted Access To Tech Giants Only

Anthropic on Tuesday unveiled an advanced artificial intelligence model designed specifically to identify software vulnerabilities, marking a significant development in the intersection of AI and cybersecurity. The model, named Claude Mythos Preview, will be available exclusively to a carefully selected group of companies as part of Project Glasswing, a new security initiative that aims to strengthen digital defenses while preventing malicious exploitation. The San Francisco-based AI company has chosen to severely restrict access to Claude Mythos Preview due to its powerful capability to detect security weaknesses and software flaws. This decision reflects growing concerns about dual-use AI technologies that could be weaponized by adversaries if they fell into the wrong hands.

The article mentions Microsoft has been more circumspect about its chip efforts. But the Maia 200 chip is definitely real and is designed specifically for Azure AI workloads. Microsoft is very much in this race, just quieter about it.